Computer Science student building ML systems at the intersection of inference, agentic AI, and quantitative domains. Experienced in FastAPI backends, PyTorch and TensorFlow model development, and LangGraph-based agentic architectures—from end-to-end RAG pipelines to GPU-accelerated inference orchestration. Strong foundation in statistical modeling and signal processing, with hands-on experience in data pipeline engineering for large-scale scientific datasets.

I'm pursuing a B.S. in Computer Science at Georgia State University with a focus on full-stack engineering, combining backend development, machine learning, and systems design to build reliable, scalable applications.

My experience spans the entire stack: FastAPI backends, PostgreSQL and MongoDB databases, PyTorch and TensorFlow model development, data pipeline engineering, RAG systems, and LangGraph agentic architectures. I've worked on everything from real-time inference orchestration on Modal to multi-layer data processing systems that handle messy scientific datasets at scale.

I'm interested in problems that require thoughtful engineering across layers, where backend architecture, ML model design, and infrastructure decisions all matter. That includes deep-tech and quantitative domains, but I'm equally engaged in building systems that work: reliable APIs, efficient data pipelines, production-grade model serving, and the infrastructure that supports them.

I believe the best engineers understand both breadth and depth—knowing how systems fit together while developing genuine expertise in specific areas. That's what drives my work.

Exploring deep learning architectures for zero-shot and weak supervision methods. Building neural network models using TensorFlow and NumPy, with hands-on work in CNN-based spatial data processing and RNN-based sequence modeling. Focused on scalable learning workflows that generalize with minimal labeled data.

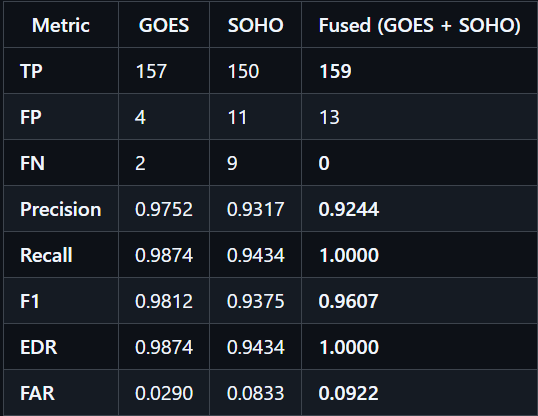

Built an end-to-end data pipeline to detect solar energetic particle events from 30 years of satellite data across multiple missions. Engineered data normalization layers, implemented detection algorithms with 100% recall and 92.4% precision, and debugged critical inconsistencies in NASA's legacy datasets. The work bridges data engineering, signal processing, and domain expertise—turning raw telemetry into actionable scientific insights.

Presidential Scholar on a full-ride merit scholarship. Focused on computer science, machine learning, mathematics, and research-driven AI systems for scientific and quantitative domains.

Some of the things I've built.

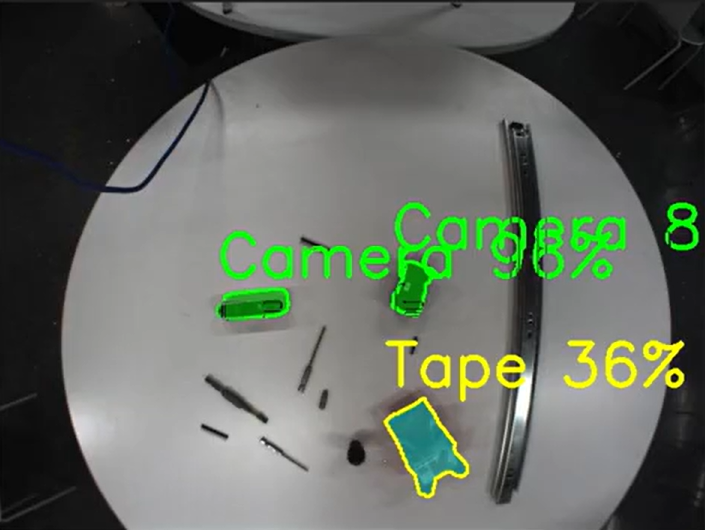

A multimodal AI system enabling voice-driven object search in cluttered workspaces. Designed a GPU-accelerated inference pipeline on Modal orchestrating speech transcription, semantic query mapping, and parallel vision models (YOLO custom detection + SAM2 segmentation). Achieved 87% mAP@50 with 88.4% precision and 82.6% recall, returning pixel-precise masks and spatial coordinates for real-time object highlighting.

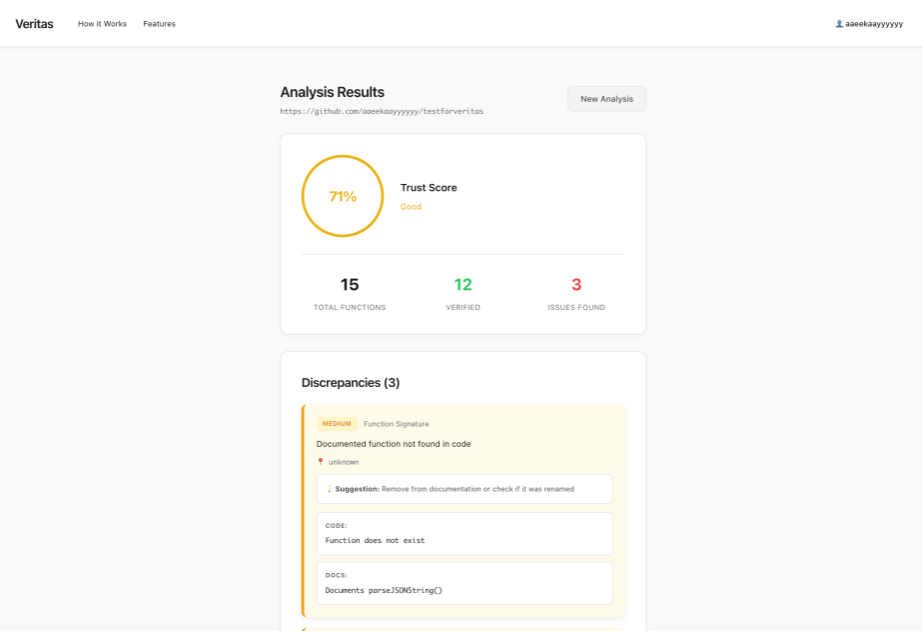

An AI-powered GitHub App that automatically detects when code changes don't match documentation. Built a 3-layer hybrid system with multi-language code parsing (Python AST, JavaScript/TypeScript regex), semantic routing via embeddings, and LLM comparison logic. Implemented adaptive routing that reduced LLM cost by 88% while maintaining 92% accuracy. Creates automated GitHub issues to keep docs and code in sync.
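As a rough illustration of the Python AST layer described above, the sketch below pulls function signatures out of source text so they can be compared against documented ones. The helper names (`extract_signatures`, `find_drift`) and the dictionary format for documented signatures are assumptions made for this example, not the app's actual code.

```python
import ast


def extract_signatures(source: str) -> dict[str, list[str]]:
    """Map each function name in `source` to its positional parameter names."""
    tree = ast.parse(source)
    signatures = {}
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            signatures[node.name] = [arg.arg for arg in node.args.args]
    return signatures


def find_drift(source: str, documented: dict[str, list[str]]) -> list[str]:
    """Return names of functions whose parameters differ from the documented ones."""
    actual = extract_signatures(source)
    return sorted(
        name
        for name, params in documented.items()
        if name in actual and actual[name] != params
    )


# Hypothetical inputs: the code gained a `retries` parameter the docs never mention.
code = "def fetch(url, timeout, retries):\n    return url\n"
docs = {"fetch": ["url", "timeout"]}
drifted = find_drift(code, docs)
```

A structural check like this is cheap, which is one plausible reason to run it before any embedding or LLM comparison: only functions flagged here would need the more expensive layers.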

A production data pipeline for detecting Solar Energetic Particle events from 30 years of multi-source satellite data (GOES, SOHO). Engineered instrument-specific adapters, satellite-year fallback logic, and dynamic version discovery across 60+ monthly files. Built Python-based data normalization deriving integral proton flux across 3 GOES generations. Achieved 100% recall (159/159 validated events) and 92.4% precision through rigorous algorithm tuning and ground-truth artifact debugging.
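For readers who want the arithmetic behind those two figures, here is a minimal sketch of how recall and precision are computed. The 159 validated events come from the summary above; the false-positive count is not stated there, so the value of 13 below is an assumption, chosen because it is the whole number that reproduces 92.4% if precision is measured against the same 159 true positives.

```python
def recall(true_positives: int, false_negatives: int) -> float:
    """Fraction of real events that the detector found."""
    return true_positives / (true_positives + false_negatives)


def precision(true_positives: int, false_positives: int) -> float:
    """Fraction of detections that correspond to real events."""
    return true_positives / (true_positives + false_positives)


TRUE_POSITIVES = 159   # validated events detected (from the summary)
FALSE_NEGATIVES = 0    # 159 of 159 validated events were found
FALSE_POSITIVES = 13   # ASSUMPTION: not stated in the summary

event_recall = recall(TRUE_POSITIVES, FALSE_NEGATIVES)
event_precision = precision(TRUE_POSITIVES, FALSE_POSITIVES)
```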

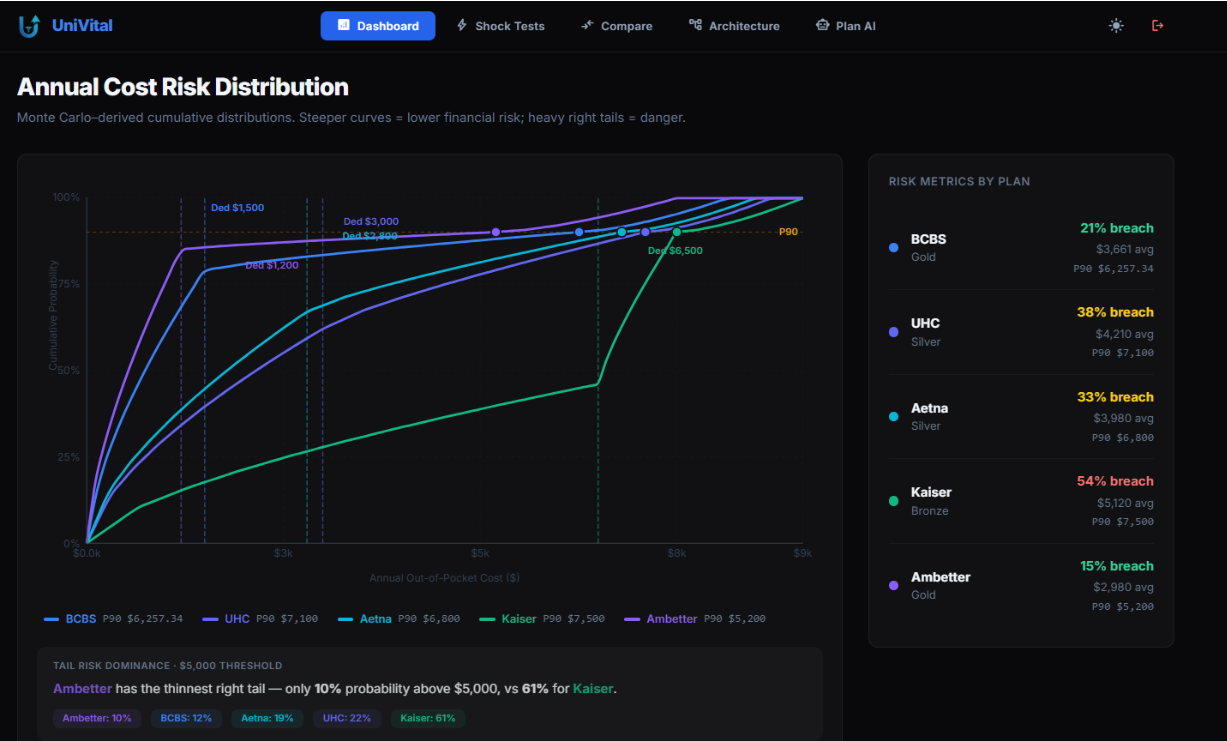

A quantitative risk modeling system for student health insurance comparison. Built a FastAPI backend with Databricks Lakehouse architecture (Bronze/Silver/Gold layers) computing premium fragility curves, subsidy cliff proximity, deductible breach probability via Monte Carlo simulation (10,000 paths), and shock stress-testing. Integrated Gemini embeddings + Actian VectorAI for RAG-based plan intelligence. Deployed risk metrics comparable to portfolio analytics in quantitative finance.
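The deductible breach probability mentioned above can be estimated with a simple Monte Carlo loop. The sketch below is illustrative only: it assumes annual medical spend follows a lognormal distribution, and the function name, parameters, and input values are hypothetical rather than taken from the project.

```python
import random


def deductible_breach_probability(
    deductible: float,
    mu: float,
    sigma: float,
    n_paths: int = 10_000,
    seed: int = 0,
) -> float:
    """Estimate P(annual spend > deductible) by simulating `n_paths` years."""
    rng = random.Random(seed)  # seeded so the estimate is reproducible
    breaches = sum(
        1
        for _ in range(n_paths)
        if rng.lognormvariate(mu, sigma) > deductible  # ASSUMPTION: lognormal spend
    )
    return breaches / n_paths


# Hypothetical inputs: median spend of about $1,100 (exp(7.0)) and a $2,000 deductible.
p_breach = deductible_breach_probability(deductible=2000.0, mu=7.0, sigma=1.0)
```

With 10,000 paths the sampling error on a probability is at most about half a percentage point, which is usually enough resolution for comparing plans side by side.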

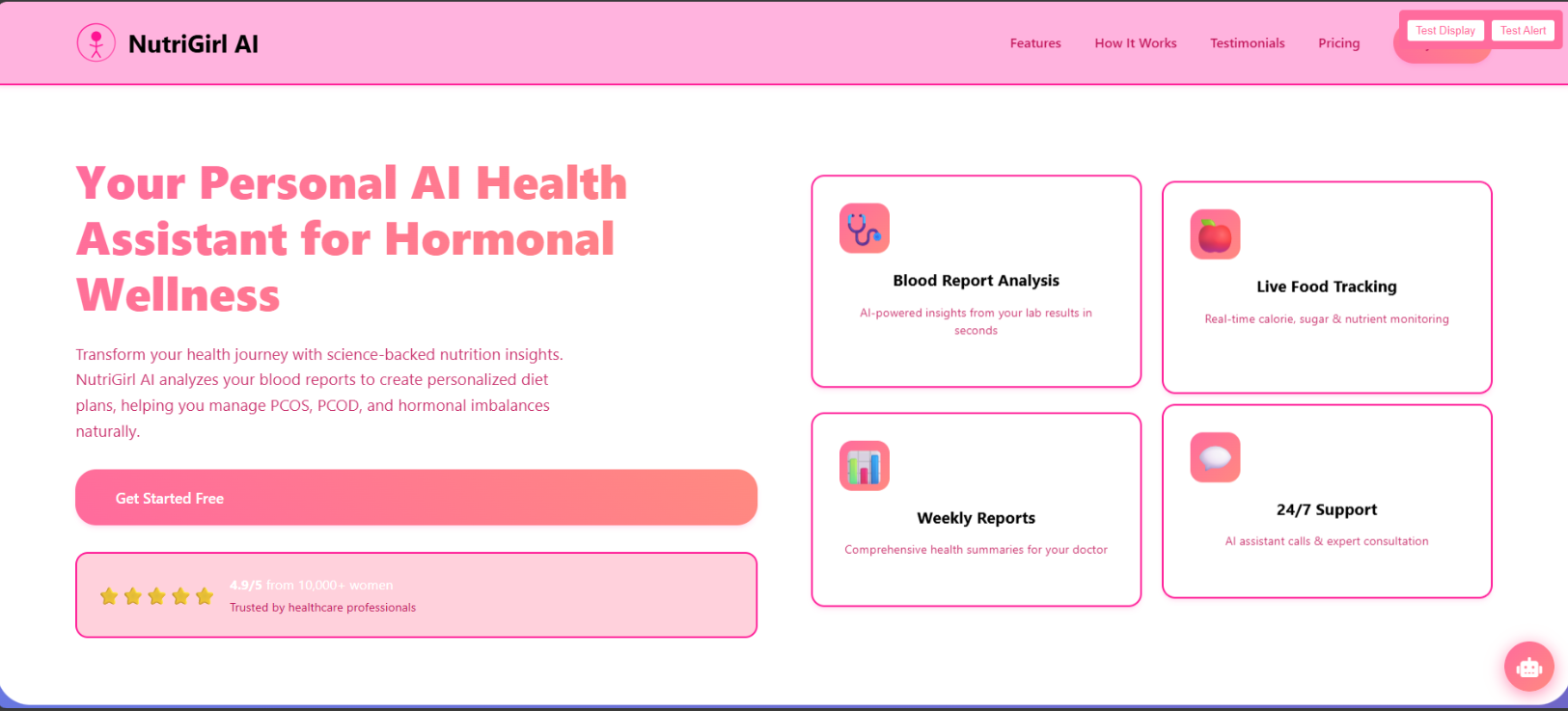

Placed 2nd overall and 2nd in the Health Track at HackHer GSU 2025. An AI-powered platform combining lab report interpretation, nutrition tracking, and pose-aware workout coaching for women managing PCOS/PCOD. Led backend and computer vision development, building a smart lab analyzer for blood report summaries and a GPT-Vision–based pose detection system using Google MediaPipe for real-time form correction. Integrated OpenAI APIs, Web Speech API, and Node.js/Express.js to enable voice-guided feedback and a 24/7 PCOS-specific chatbot.

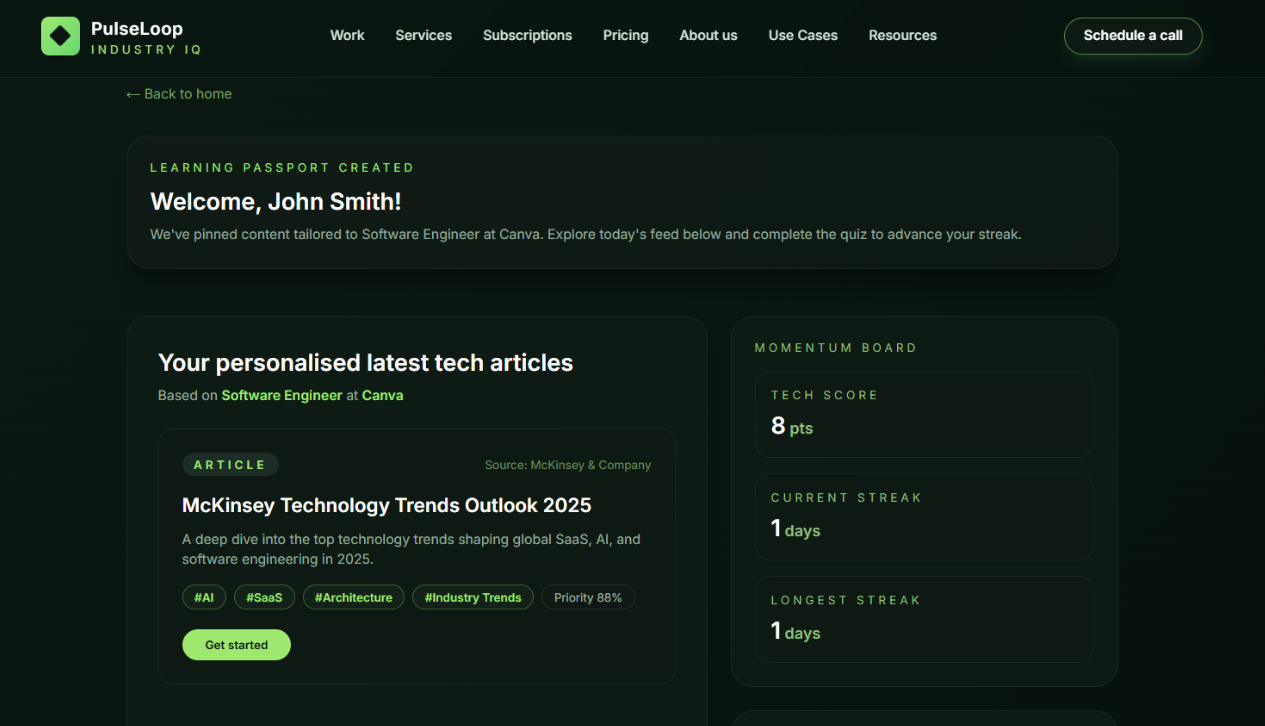

An enterprise SaaS platform automating industry awareness through AI-curated content and adaptive learning. Built a FastAPI + MongoDB + React stack with role-aware content pipelines processing 4–6 articles/podcasts per user. Integrated DeepSeek-V3.1 for adaptive quiz generation: when a user misses 3+ questions, the model identifies weak concepts and generates targeted follow-up questions with specific timestamps for focused review. Automated content ingestion via Azure Functions with MongoDB transactions for consistency.

Let's discuss your next project or just say hello!

I'm always open to discussing new opportunities, interesting projects, or just having a chat about technology and development.

Location

Atlanta, Georgia

Response Time

Within 48 hours

I'm open to collaborations on interesting projects, research ideas, and deep-tech initiatives. Whether it's exploring inference optimization, building agentic systems, or tackling quantitative problems—let's talk.

The best way to reach me is through the contact form above.